Generative AI in cybersecurity: Uses, benefits, and risks

Security teams process large volumes of alerts and logs, making manual analysis difficult to scale. Generative AI helps summarize and contextualize this data and supports incident response.

While it can improve efficiency and reduce analysis time, it also introduces risks, both in how organizations deploy these systems and how attackers may use them to create and scale threats.

This guide covers how generative AI is used in cybersecurity, along with its benefits and risks.

What is generative AI in cybersecurity?

Generative AI in cybersecurity refers to systems that process security information and generate outputs such as summaries, code, search queries, response guidance, or simulated scenarios.

These systems are typically built on architectures such as:

- Large language models (LLMs): Interpret and explain security data, including logs, alerts, and incident details.

- Generative adversarial networks (GANs): Generate synthetic data (artificial data based on real patterns) or simulate attack scenarios for testing and training.

In practice, generative AI is integrated with logs, alerts, incident data, policies, and threat intelligence. It can work with both structured data (such as logs) and unstructured data (such as text), allowing it to generate outputs that help teams understand and respond to security events.

How it works in a security context

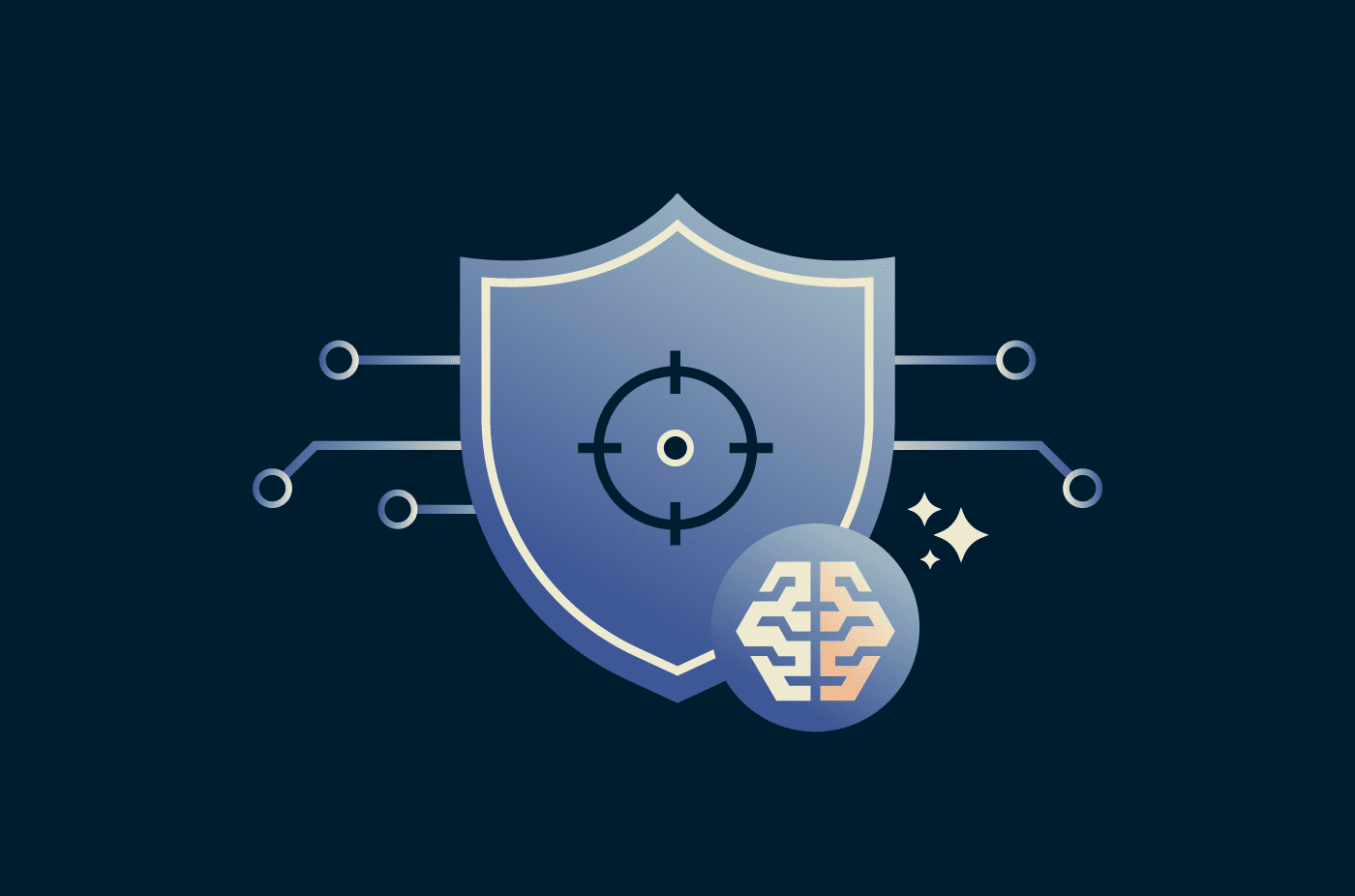

Generative AI systems are trained on large datasets from multiple sources. During use, they take input (e.g., a suspicious log entry) and generate outputs such as summaries or response suggestions based on patterns learned during training.

Some implementations also use retrieval-augmented generation (RAG), which retrieves relevant data from connected tools in response to a request. This can help keep responses aligned with current data rather than relying solely on training data.

How it differs from traditional AI in cybersecurity

Traditional AI systems focus on detecting patterns and classifying activity, such as identifying malware or flagging behavior that deviates from a baseline. These systems typically produce alerts, risk scores, or classifications that indicate whether activity is suspicious.

Generative AI complements this by producing outputs that help analysts interpret and act on security data. The key differences are outlined below:

| Aspect | Traditional AI in cybersecurity | Generative AI in cybersecurity |

| Primary role | Detects anomalies or classifies activity | Generates outputs to support investigation and response |

| Output type | Alerts, scores, or labels (e.g., malicious or benign) | Summaries, queries, detection rules, and response steps |

| Data handling | Uses structured telemetry and labeled data | Processes structured and unstructured data |

| Interaction style | Uses predefined queries or workflows | Supports natural-language interaction |

| Analyst effort | Requires analysts to interpret alerts and define next steps | Supports interpretation and suggests next steps |

Key use cases and benefits of generative AI in cybersecurity

Beyond alerting, generative AI can help surface and summarize related events, generate explanations of findings, and support investigation workflows by working with data from existing security systems. These use cases span several areas:

Core security operations

In core security operations, generative AI works with logs, alerts, and other system data to help analysts organize information, summarize incidents, and move through investigation workflows more efficiently. This includes:

- Investigation summary: Converts raw alerts and logs into a clear summary of the incident, including key systems, users, and timestamps.

- Identity and access tracking: Surfaces unusual privilege changes, repeated access failures, and role misuse.

- Data and tool unification: Presents relevant data from endpoints, cloud tools, and identity systems in a single view.

It can also draw on internal playbooks, past incidents, and security policies to surface relevant guidance during investigations. This helps analysts apply consistent workflows without having to search across multiple systems.

Threat and fraud defense

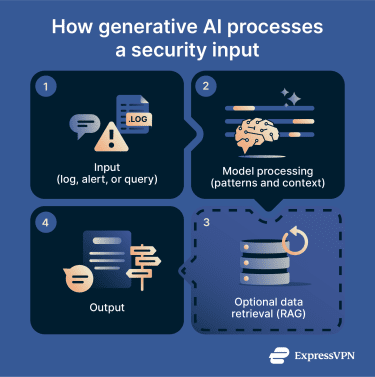

In threat and fraud defense, generative AI draws on data from multiple systems to help analysts connect related activity, understand how incidents unfold across stages, and interpret patterns that may not be obvious from individual alerts. This includes:

- Attack progression mapping: Illustrates how an event moves from initial access to persistence and lateral movement across systems.

- Multi-stage correlation: Reveals coordinated activity across systems and over time that may not appear suspicious on its own.

- Intent inference: Highlights patterns that may indicate goals such as persistence, data access, or privilege escalation.

In addition to helping identify coordinated activity across systems, it can surface comparable past cases, helping analysts refine detection logic and apply more targeted responses.

In addition to helping identify coordinated activity across systems, it can surface comparable past cases, helping analysts refine detection logic and apply more targeted responses.

Security readiness and testing support

Traditional approaches, such as static test cases and initial threat modeling, have limitations. Generative AI can support security readiness by generating variations of attack techniques and environment-specific scenarios, helping teams test how controls respond under different conditions. This includes:

- Technique variation: Produces multiple versions of attack methods across different systems and configurations.

- Environment-specific scenarios: Creates simulations that reflect the environment’s systems, configurations, and controls.

- Execution paths: Shows how attacks may adapt when controls block an initial approach.

Beyond simulation, generative AI can help identify gaps in how controls perform under different conditions. Because these simulations can be updated with current system data and prior results, they support ongoing validation rather than one-off exercises.

It also supports more realistic training by introducing scenarios where analysts must make decisions with incomplete or conflicting information, reflecting real-world uncertainty.

Risks and challenges of generative AI in cybersecurity

Generative AI introduces a range of risks and challenges in cybersecurity. These include both how attackers use generative AI to scale and adapt threats and the risks organizations face when deploying these systems.

The impact varies depending on how systems are designed, configured, and used, and in many cases can be reduced through appropriate controls and oversight.

AI-generated cyberattacks

Generative AI lowers the effort needed to create convincing cyberattacks. Attackers require less technical expertise to produce AI-generated phishing emails, fake websites, malicious scripts, or targeted messages.

These attacks can appear more polished than earlier campaigns, with natural language and content that can be tailored to a target’s role, location, or recent activity. This includes:

- Malware modification: Rewrites or obfuscates malicious code to evade signature-based detection.

- Script generation: Produces PowerShell, Python, or shell scripts for credential theft, persistence, or information gathering.

- Reconnaissance support: Summarizes publicly available information about employees, suppliers, or systems to tailor attacks.

- Email variation: Produces variations of the same message, reducing the effectiveness of pattern-based filtering.

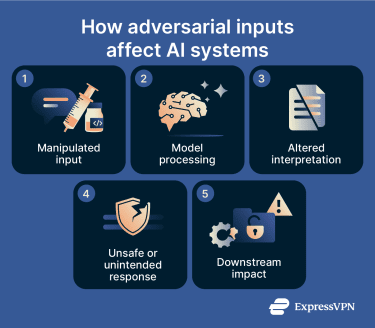

AI model manipulation and adversarial attacks

Generative AI systems can produce unsafe or misleading results when inputs or external data influence how the system interprets context. This may lead to responses that bypass safeguards, expose restricted information, or evade controls.

This risk increases when models have access to internal systems, external data sources, or automated workflows. Common attack techniques include:

- Prompt injection: Inputs designed to override system instructions or cause the model to expose sensitive data or perform unintended actions.

- Data poisoning: Malicious or manipulated data that influences outputs, especially when the system retrieves external content or relies on connected knowledge sources.

- Context manipulation: Inputs shaped over multiple interactions to steer the model toward unsafe or misleading responses.

- Evasion techniques: Inputs are subtly modified to avoid detection, causing the model to misinterpret potentially harmful content as safe or normal.

When connected to plugins, APIs, or tools such as databases, ticketing systems, or security platforms, compromised inputs can affect responses or, in some cases, trigger actions across those integrations.

Data privacy and leakage risks

Generative AI tools often require access to large volumes of data to function effectively. If organizations send sensitive data to public or third-party AI systems, they may risk exposing internal documents, customer records, credentials, or source code.

This risk increases when consumer AI tools are used without clear data handling policies or visibility into how information is stored, used, or retained. This can lead to potential data leakage through:

- Sensitive prompts: Confidential emails, contracts, or incident details may be entered into AI tools.

- Insecure integrations: Connected systems may expose logs, tickets, or documents containing confidential information.

- Weak access controls: Users may gain access to AI-generated outputs containing data they aren’t authorized to access.

- Third-party retention: External AI providers may retain prompts or outputs longer than intended.

Organizations also face legal and compliance risks when AI systems process sensitive or regulated data. Privacy laws, contractual obligations, and industry requirements may limit how personal information is stored, transferred, or analyzed using AI services.

Deepfakes and identity fraud

Generative AI makes it easier to create realistic audio, video, and images that imitate real people. These deepfakes can be used to impersonate executives, employees, or public figures, often with a higher degree of realism than earlier methods.

These techniques are used in fraud and impersonation scenarios, where convincing audio or visual content is used to influence decisions or bypass verification processes. This includes:

| Deepfake type | Typical use | Risk |

| Voice cloning | Impersonating executives or coworkers in calls | Unauthorized payments or disclosure of information |

| Manipulated video | Impersonation in meetings or recorded messages | Social engineering and reputational damage |

| Synthetic images | Creating fake IDs, profile photos, or evidence | Identity fraud and account creation abuse |

Overreliance on automated systems

Generative AI can speed up analysis, but it may also create a false sense of confidence. This can lead to:

- Outputs being accepted without independent verification.

- Missing context, which may lead to incomplete conclusions.

- Suggested actions that may not fully address the issue or may introduce new risks.

- Low-confidence outputs or conflicting signals being overlooked.

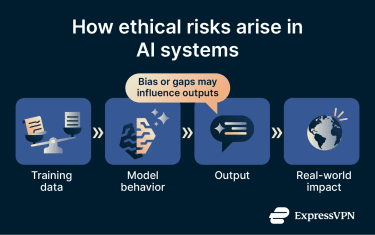

Ethical and regulatory considerations

Generative AI raises ethical concerns around fairness, transparency, and accountability.

If a model produces biased results or harmful recommendations, organizations need to understand who is responsible and how the output was generated.

This becomes more complex when the model’s decision-making process isn’t easily interpretable, making it harder to explain how a particular output was generated.

Bias can also arise when training data reflects historical inequalities or incomplete information. In security settings, this may lead to unfair treatment of users, inaccurate risk scoring, or inconsistent investigation outcomes.

Best practices for implementing generative AI in cybersecurity

To manage risk and use generative AI effectively, organizations need clear governance, appropriate controls, and ongoing validation. These practices help support safe, controlled, and effective use:

Clear security and governance policies

Organizations should establish policies that define acceptable use, data handling, oversight, and accountability for generative AI. Key controls include:

- Define approved use cases: Specify which tasks teams can perform with generative AI, such as analysis support, documentation, or controlled testing.

- Restrict sensitive data usage: Set rules for handling internal documents, credentials, and regulated data before they’re entered into AI systems. If possible, use an AI model that includes privacy protections or secure enclaves.

- Control access and permissions: Limit who can use AI tools and which systems they can access based on roles and responsibilities.

- Establish audit requirements: Track system use, including prompts, outputs, and resulting actions.

Learn more: How ExpressAI secures your AI chats with secure enclaves.

Output validation and guardrails

Teams need controls to validate outputs before they’re used. This includes:

- Output verification: Compare results with logs, alerts, or system data to confirm accuracy.

- Rule-based constraints: Limit what the system can generate or recommend, especially for sensitive actions.

- High-risk output filtering: Block or flag responses that involve credentials, system changes, or sensitive information.

- Evaluation metrics: Prioritize outputs that align with source context, pass safety checks, and remain consistent with verified data.

Human oversight and accountability

Generative AI supports analysis, but oversight remains important where decisions affect critical systems or access. In these cases, decisions should be reviewed and approved before action is taken.

This is especially important for actions such as credential resets, account suspension, and external reporting. Requiring approval before execution helps reduce the risk of errors and maintain accountability across security operations.

Data quality and security

The quality of data used by or connected to generative AI directly affects the reliability of its outputs. Strong data practices help maintain accuracy while reducing the risk of exposing sensitive information. This includes:

- Use clean, structured data from trusted sources.

- Remove stale or duplicated records that may distort outputs.

- Label data by source and trust level.

- Keep threat intelligence and system data up to date.

Monitoring, testing, and refinement

Generative AI requires ongoing evaluation after deployment to ensure outputs remain accurate and aligned with changing data, systems, and threats. This involves several key practices:

- Accuracy tracking: Compare outputs with validated data and review trends in grounding, safety, and response quality over time.

- Scenario testing: Test the system using past incidents and simulated attack scenarios to identify gaps that may not appear in routine use.

- Input and output auditing: Log prompts, responses, and access activity so teams can review behavior, trace system activity, and investigate errors or misuse.

- Control and data refinement: Adjust guardrails, access, and connected data sources regularly so outputs remain aligned with current systems and threats.

The future of generative AI in cybersecurity

Generative AI is expanding from supporting individual tasks to shaping how security operations are structured. It’s influencing how workflows are designed, decisions are made, and how systems coordinate across environments.

How security operations will evolve with AI

Security operations centers (SOCs) are starting to shift toward workflows where AI handles parts of routine triage and investigation across connected tools, while analysts focus on complex cases. This shifts their role from queue-based triage to exception handling, control tuning, workflow design, and AI performance oversight.

At the same time, organizations are building data foundations that allow AI systems to operate effectively at scale. These platforms aim to unify telemetry, normalize inputs, and provide the context needed to connect events, identities, and systems. This enables several operational changes:

- Continuous, system-led operations: Security workflows can run continuously in the background rather than relying on manual triage cycles.

- Policy-driven orchestration: Systems can use predefined rules to process alerts and take actions across tools without manual input.

- Context-rich decision-making: Analysts and systems evaluate activity using combined context from multiple sources to improve prioritization and response.

- Greater analyst focus: Analysts concentrate on complex investigations and strategic decisions rather than routine processing.

Emerging trends in AI-driven cyber defense

Beyond day-to-day SOC workflows, AI is contributing to broader changes in how security is structured, what systems must be protected, and how organizations collaborate. This leads to several broader changes:

- Security of AI systems: Models, data pipelines, and AI applications are becoming critical assets that require dedicated protection.

- Identity- and data-centric security: Managing access and data flow becomes more important as AI systems rely heavily on both.

- Standardization and interoperability: Shared frameworks and data formats improve coordination across tools and environments.

- Collective defense efforts: Organizations are increasingly sharing threat intelligence to strengthen defenses against emerging AI-related risks.

FAQ: Common questions about generative AI in cybersecurity

Can generative AI help small cybersecurity teams do more with fewer resources?

What industries benefit most from generative AI in cybersecurity?

How can businesses use generative AI without exposing sensitive data?

How do human analysts and generative AI work together in security operations?

What are the limitations of generative AI in cybersecurity?

Take the first step to protect yourself online. Try ExpressVPN risk-free.

Get ExpressVPN